TL;DR: the economics says Manchester.

Channel 4 is moving 300 staff out of London, with most going to a major British city. (1) The HQ2 shortlist has just been announced, and seven city-regions are on it: Bristol, Cardiff, Glasgow, Leeds, Liverpool, Greater Manchester and the West Midlands. The winner will be announced on 1 October.

So, who should win? And what effects could the move have on the local economy? Is it really a big deal?

Full disclosure: I work at Birmingham University. I also work at the What Works Centre for Local Economic Growth. These are my own views.

*

Economists have a simple answer to the first question: look for the biggest cluster of TV/radio/media activity outside of London, and move Channel 4 there.

Why? Because adding hundreds of jobs to an existing hotspot should have catalytic effects beyond the direct transfer of those jobs.

Those catalytic effects are driven by what economists call agglomeration economies: the matching, sharing and learning effects that help workers and firms become more innovative and productive, and which depend on proximity and face-to-face interaction. (There may also be downsides to big moves like this. I’ll come back to those below.)

For media firms, benefits might arise from local contracting and sub-contracting; staff moves; networking; as well as the critical mass and diversity of ideas and industry expertise.

The wider economy could gain too, through multiplier effects: high-paid media workers may help support jobs in local services, for example. US evidence suggests the tech multiplier is as much as 4.9 extra local jobs per tech job, but this UK study, albeit post-crisis, finds a much lower number: 0.9 for each tech job.
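To get a feel for how wide that range is, here is a back-of-envelope sketch. It assumes, purely for illustration, that the tech multipliers quoted above carry over to media jobs and that all 300 relocated roles land in one place; neither assumption comes from the studies themselves, and the function name is made up for this example.

```python
# Illustrative arithmetic only: applies the two tech multipliers quoted above
# to a hypothetical block of relocated jobs. Not a forecast.

def extra_local_jobs(relocated_jobs: int, multiplier: float) -> float:
    """Extra local jobs supported, given a jobs-per-job multiplier."""
    return relocated_jobs * multiplier

RELOCATED_JOBS = 300        # total C4 jobs leaving London (assumed to land in one city)
US_TECH_MULTIPLIER = 4.9    # upper-end US estimate quoted above
UK_TECH_MULTIPLIER = 0.9    # post-crisis UK estimate quoted above

for label, multiplier in [("US", US_TECH_MULTIPLIER), ("UK", UK_TECH_MULTIPLIER)]:
    jobs = extra_local_jobs(RELOCATED_JOBS, multiplier)
    print(f"{label} multiplier {multiplier}: about {jobs:.0f} extra local jobs")
```

The gap between the two answers is the point: which multiplier applies matters far more than the headline job count.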

*

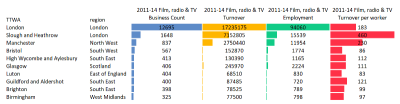

Which media cluster is the biggest? The data is unambiguous. As Tom Forth shows using NESTA data (original report here), on the UK mainland, Greater Manchester has by far the largest cluster of TV/radio/film activity outside of mega-London. If it cares about economic impact, Channel 4 should put HQ2 in Manchester.

But is this C4’s only criterion? No. The move aims to improve Channel 4’s country-wide fit and programming, as well as boost the winner’s local economy. Now, it’s not clear to me how to decide ‘fit’ objectively. And lots of other local factors will also be in play as C4 bosses do their fact-finding tours: the property deal, the buzz, and the persuasiveness of local leaders, especially in cities with Mayors.

Alternatively, if C4 wants to rebalance media activity more evenly, it should put HQ2 in either Birmingham or Coventry, the least media-intensive places on the shortlist. These would also win for travel time to London, which may be more of a factor than we think. It’s notable that Birmingham is widely considered the frontrunner.

C4 is already trying to move bits of itself to three different places, so I wouldn’t rule out the HQ2 move being part of that pattern. But from an economist’s point of view, this is not the right approach: it risks having no catalytic effects anywhere.

*

That brings us to the other big question. How big could the C4 effect be?

Here we have some idea of what to expect. Tom has done a diff-in-diff analysis of the BBC’s move to MediaCity, and finds significant rises in film/radio/TV industry productivity (revenue/worker) in Greater Manchester.
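For readers who haven’t met diff-in-diff before, the basic idea can be sketched in a few lines. This is a minimal two-period, two-group version with made-up numbers; it is not Tom’s data or his exact specification, and the function and variable names are hypothetical.

```python
# Minimal difference-in-differences sketch with invented numbers.
# "Treated" stands for the area that received the move; "control" for a
# comparison area assumed to have followed the same trend otherwise.

def diff_in_diff(treated_before: float, treated_after: float,
                 control_before: float, control_after: float) -> float:
    """Change in the treated group minus change in the control group."""
    return (treated_after - treated_before) - (control_after - control_before)

# Hypothetical revenue per worker (in £ thousands), before and after the move.
effect = diff_in_diff(
    treated_before=100.0, treated_after=130.0,   # treated area: up 30
    control_before=100.0, control_after=110.0,   # control area: up 10
)
print(f"Estimated effect of the move: {effect:.0f} (thousand £ per worker)")
```

The estimate is only as good as the assumption that, without the move, the treated area would have tracked the control area.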

More recently, the Centre for Cities found 1400 additional creative industries jobs appeared in Salford on top of the 2600 BBC jobs, but little effect on the wider (non-creative) economy.

Notably, CFC showed that many of the new creative jobs in Salford came from elsewhere in GM. I’m not sure that’s actually a bad thing, if it suggests that firms are physically clustering closer to MediaCity. We know that knowledge spillovers die away with distance, and these decay effects are especially large in the creative industries.

(The What Works Centre is doing its own impact analysis using the synthetic control approach. We hope to have results available soon.)

*

The apparently weak wider effects found by CFC may be explained by potential negative impacts of a move. Here are some things the winning city needs to watch out for.

First, large public sector moves might — in theory — lead to some crowding-out of the private sector, via changes to local wages (if C4 pays better than local incumbents) and/or prices (via higher property costs).

Second, we don’t know how far the BBC actually changed its procurement and contracting patterns after the move, or more importantly, whether staff spend enough locally to have a direct effect on supporting other jobs. (Channel 4 should track its own spend, and consider surveying staff.)

Third, any multiplier effects could be washed out by other changes in the city-region economy. In the MediaCity case, that could be the post-crash downturn or austerity.

*

All of this gives us an idea of what to expect from Channel 4’s move. It should also moderate our expectations.

For one thing, C4 is shifting far fewer positions than the BBC (300 vs. 2000+ jobs), and not all of the 300 will go to the same place. It’s big for C4 (nearly 40% of its staff) but tiny vs. the Beeb’s move.

Second, there is a case for pessimism. As Tom says here, if the move is this small in the aggregate, should C4 even bother? Well … even if the whole of C4 left London, this would still involve fewer people than the slice of the BBC that moved to MediaCity. For me, this makes the agglomeration story even more important — HQ2 will have the biggest catalytic effect if it goes to the biggest existing cluster, that is, Manchester. (In technical terms, leveraging existing agglomeration economies is most likely to give non-linear impacts.)

Third, we need to know more about what kind of jobs are moving. As Bec says, the roles that relocate matter a lot, especially as they help determine the level of interaction with other local media firms.

The final thing to mention is that C4’s organisational culture will also need to shift. Commissioning the same London-based programme-makers from some new building outside the capital is more than pointless. Generating real benefits requires behaviour change, as well as location change.

***

(1) Bill points out that this is a GB study, not UK. Northern Irish cities don’t feature in the HQ2 shortlist. That said, Belfast is on the shortlist for one of the two planned ‘creative hubs’ outside London, as are Brighton, Newcastle-Gateshead, Nottingham, Sheffield and Stoke-on-Trent.